By Senior Search Architect | Last Updated: February 22, 2026

The “wait and see” era of UK AI regulation is officially over. As of February 2026, the legislative dust has settled, leaving a framework radically different from the EU’s prescriptive AI Act. With the Data (Use and Access) Act 2025 (DUAA) having transitioned into full statutory enforcement earlier this month (February 5), UK tech leaders must now pivot from theoretical ethics to hard technical compliance.

Look, I’ll be honest: the transition hasn’t been as “light-touch” as the government promised in 2024. While we avoided the horizontal bans seen in Brussels, the new Sovereign-Scale Security Framework has introduced rigorous technical redlines that every Series A+ founder needs to understand.

AI Overview: The 2026 State of Play

The UK regulates AI through a decentralized, sector-led model anchored by the Data (Use and Access) Act 2025. Rather than adopting the EU’s risk-based classification, the UK follows a “Security-Led Growth” model: it uses the AI Security Institute (AISI) to enforce technical redlines, while tax-advantaged AI Growth Zones offset compliance costs and accelerate infrastructure development.

The “Sovereign-Scale” Framework: Your 3-Point Compliance Anchor

To navigate this landscape, our team uses the Sovereign-Scale Framework. It is the baseline for any firm looking to win UK government procurement or use the new Growth Zones.

- Security-First Alignment: Shifting from “Safety” to “Security” through contextual governance strategies. This means proving your model can withstand specific red-teaming scenarios, such as autonomous self-replication and deceptive behavior, defined by the AISI.

- Data Liquidity Optimization: Maximizing the DUAA 2025 provisions to access the National Data Library and utilizing the new “scientific research” exemptions that now include commercial R&D.

- Regional Arbitrage: Strategically placing compute or operations in AI Growth Zones (like Lanarkshire, South Wales, or Oxfordshire) to leverage power-priority mechanisms and hardware tax credits.

What is the Current Legal Status of AI in the UK (Feb 2026)?

Honestly, the biggest misconception right now is that there is no “AI Law” in the UK. While we don’t have a single “AI Act,” the Data (Use and Access) Act 2025 functions as the spine of the system. Enforcement of these duties is coordinated through the DRCF (Digital Regulation Cooperation Forum), which helps ensure that the ICO, CMA, and Ofcom do not impose overlapping or conflicting mandates on AI firms.

Did the 2025 AI Bill pass or was it absorbed?

The widely anticipated “AI Bill” was officially absorbed into the DUAA 2025 and the Modern Industrial Strategy. This was a strategic move to prevent “regulatory calcification.” Instead of one law, we have Statutory AI Duties for regulators like Ofcom and the CMA to enforce AI-specific guardrails.

Definition: Statutory AI Duty refers to the 2026 UK legal requirement for sectoral regulators to prioritize AI innovation and safety within their existing remits, rather than creating a new central AI regulator.

How does the ‘Not Materially Lower’ standard replace GDPR adequacy?

The DUAA 2025 introduced the “Not Materially Lower” (NML) test for international data transfers. This is the UK’s “Declaration of Independence” from the EU’s rigid “Essentially Equivalent” standard.

Pro-Tip: If you are a UK SaaS firm syncing data with US-based LLMs, your Transfer Risk Assessment (TRA) must now use the NML Standard. It is more flexible but requires a documented “Security Audit” from your US partner to ensure the data is protected to a standard that isn’t materially lower than UK law.
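To make the Pro-Tip concrete, here is a minimal Python sketch of how a team might track an NML-based TRA internally. Everything in it is an assumption for illustration: the `TransferRiskAssessment` class, its fields, and the sign-off rule are hypothetical and do not reflect any official ICO template. It simply blocks sign-off until the US partner’s documented security audit is on file and no assessed area has been judged materially lower than UK protection.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TransferRiskAssessment:
    """Hypothetical internal record for a UK-to-US transfer risk assessment."""

    importer: str
    # Protection area -> True if judged NOT materially lower than UK law.
    not_materially_lower: dict[str, bool] = field(default_factory=dict)
    # Reference to the importer's documented security audit, if received.
    security_audit_ref: Optional[str] = None

    def blocking_issues(self) -> list[str]:
        """List every reason the TRA cannot yet be signed off."""
        issues: list[str] = []
        if not self.security_audit_ref:
            issues.append("missing documented security audit from importer")
        if not self.not_materially_lower:
            issues.append("no protection areas assessed")
        for area, ok in sorted(self.not_materially_lower.items()):
            if not ok:
                issues.append(f"protection judged materially lower: {area}")
        return issues

    def ready_for_sign_off(self) -> bool:
        return not self.blocking_issues()


# Usage: a hypothetical vendor with one weak area and no audit on file.
tra = TransferRiskAssessment(
    importer="example-us-llm-vendor",
    not_materially_lower={"security_of_processing": True, "onward_transfers": False},
)
print(tra.blocking_issues())
# -> ['missing documented security audit from importer', 'protection judged materially lower: onward_transfers']
```

Keeping the reasons as a list, rather than a single pass/fail flag, gives reviewers a record of what was missing at each stage of the assessment.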

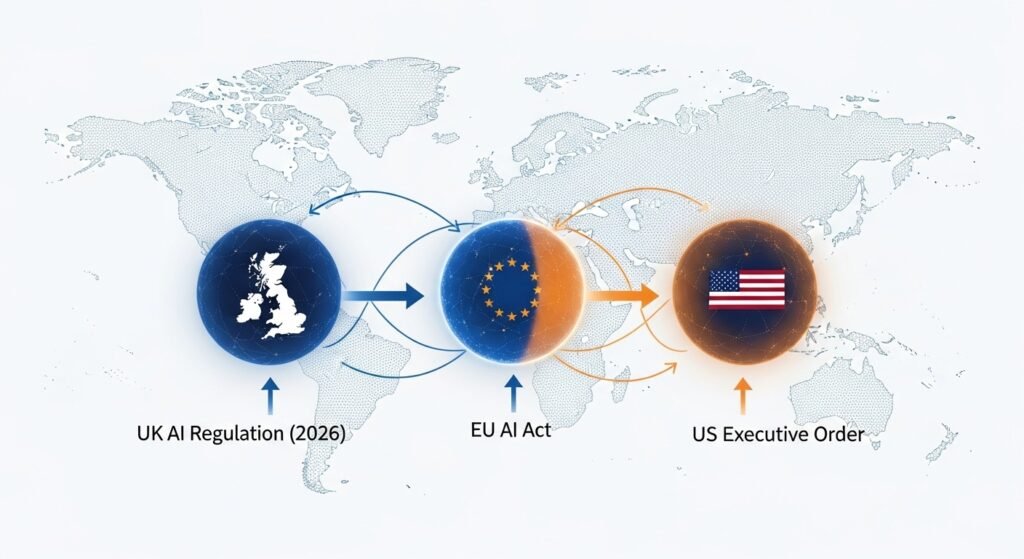

Why is the ‘Washington Effect’ Driving UK-EU Divergence?

The Starmer-Trump AI Accord (the Technology Prosperity Deal) signed in late 2025 changed the calculus for London-based firms. It effectively mitigated the “Brussels Effect” by aligning UK standards with US security-focused Executive Orders.

How does the UK-US Accord affect tech firms?

The accord created a “friction-free corridor” for AI professionals and technical standards. For a London-based startup, this means a SaaS product classified as “High Risk” in Paris may not face the same obligations in London, provided you meet the AISI’s specific security redlines.

2026 Regulatory Friction: UK vs. EU vs. US

| Metric | UK (Principles-Based) | EU (Prescriptive) | US (Executive-Led) |
| --- | --- | --- | --- |
| Maximum Fines | Up to £17.5m or 4% of global turnover | Up to €35m or 7% of global turnover | Litigation / Consent Decrees |
| Data Scraping | TDM Exception (with Opt-out) | Highly Restricted (Art. 53) | Fair Use (Under Challenge) |
| Speed to Market | High (Growth Zone Fast-track) | Low (Conformity Assessment) | Moderate |
| Sovereign Support | £500m Sovereign AI Unit | EuroHPC / AI Factories | Private-Equity Dominant |

Tactical Deep-Dive: Navigating the DUAA 2025 Requirements

Case Study: “AgenticFlow AI” (SaaS Simulation)

- Profile: Series B, 45 employees, £12M ARR.

- Product: Autonomous agentic AI for B2B supply chain procurement, governed by dedicated agent security protocols.

- The 2026 Problem: They use “Human-out-of-the-loop” agents that negotiate contracts.

- The Fix: Under the DUAA 2025 automated decision-making (ADM) rules, they had to implement “meaningful human intervention” safeguards and a clear challenge mechanism for affected parties to remain compliant.
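The safeguards AgenticFlow AI needed can be sketched as a routing gate in front of the agent. This is a minimal Python illustration under stated assumptions: the `AgentDecision` record, the £50,000 `SIGNIFICANCE_THRESHOLD_GBP` used as a proxy for a “significant” decision, and all function names are hypothetical. Low-value outcomes are auto-approved, higher-value ones wait for a named human reviewer, and an affected party can challenge any outcome, which sends it back to a human.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    AUTO_APPROVED = "auto_approved"
    PENDING_HUMAN_REVIEW = "pending_human_review"
    HUMAN_APPROVED = "human_approved"
    HUMAN_REJECTED = "human_rejected"


# Hypothetical proxy for a "significant" decision; set your own threshold.
SIGNIFICANCE_THRESHOLD_GBP = 50_000


@dataclass
class AgentDecision:
    decision_id: str
    counterparty: str
    contract_value_gbp: float
    rationale: str  # the agent's stated reasons, kept so they can be explained
    status: Status = Status.PENDING_HUMAN_REVIEW
    history: list[str] = field(default_factory=list)


def route(decision: AgentDecision) -> AgentDecision:
    """Auto-approve only low-value decisions; queue the rest for a human."""
    if decision.contract_value_gbp < SIGNIFICANCE_THRESHOLD_GBP:
        decision.status = Status.AUTO_APPROVED
    else:
        decision.status = Status.PENDING_HUMAN_REVIEW
    decision.history.append(f"routed:{decision.status.value}")
    return decision


def human_review(decision: AgentDecision, reviewer: str, approve: bool) -> AgentDecision:
    """Record a named human's approval or rejection."""
    decision.status = Status.HUMAN_APPROVED if approve else Status.HUMAN_REJECTED
    decision.history.append(f"reviewed_by:{reviewer}:{decision.status.value}")
    return decision


def challenge(decision: AgentDecision, reason: str) -> AgentDecision:
    """Let an affected party contest any outcome, returning it to a human."""
    decision.status = Status.PENDING_HUMAN_REVIEW
    decision.history.append(f"challenged:{reason}")
    return decision


# Usage: a low-value deal is auto-approved, challenged, then overturned.
deal = route(AgentDecision("d-001", "Acme Ltd", 10_000, "lowest compliant quote"))
deal = challenge(deal, "pricing error")
deal = human_review(deal, "j.smith", approve=False)
print(deal.status, deal.history)
```

Keeping every routing, review, and challenge step in `history` also leaves a record to draw on when a decision later has to be explained.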

How to manage ‘Agentic AI’ liability?

Here’s the thing: the UK’s 2026 Liability Framework places a significant burden on the deployer. If your agent triggers a breach, you must be able to call on your model provider to explain how the system operates, so make sure your third-party contracts include this obligation.

Step-by-Step: The March 18 “Copyright Audit” Protocol

We are less than a month away from the March 18, 2026, statutory copyright deadline. Under Sections 135 and 136 of the DUAA, the government must publish an economic impact assessment and lay a report before Parliament on the use of copyright works in AI development.

- Inventory Your Training Data: Identify every dataset ingested into your model weights and document its provenance clearly.

- Verify Opt-Outs: Cross-reference your datasets against the National AI Opt-Out Register using automated tooling.

- Prepare Transparency Files: Be ready to disclose how you access works (e.g., via web crawlers) to the Intellectual Property Office (IPO).
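The inventory and opt-out checks in this protocol lend themselves to automation. The following Python sketch assumes, hypothetically, that the register can be exported as a CSV with a `domain` column; its real format and access route are not specified here, so `load_opt_outs`, `SourceRecord`, and `audit` are illustrative names only. Matching sources are flagged for manual review rather than deleted, and the access-method tally is the kind of summary a transparency file for the IPO might draw on.

```python
from __future__ import annotations

import csv
import io
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class SourceRecord:
    dataset: str
    url: str            # where the work was obtained
    access_method: str  # e.g. "web_crawler", "licensed_feed"


def load_opt_outs(csv_text: str) -> set[str]:
    """Parse an opt-out export with a 'domain' column (assumed format)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["domain"].strip().lower() for row in reader if row.get("domain")}


def domain_of(url: str) -> str:
    """Lower-cased host name with any leading 'www.' removed."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def audit(records: list[SourceRecord], opted_out: set[str]) -> dict:
    """Flag records whose domain, or any parent domain, has opted out."""
    flagged = []
    for rec in records:
        parts = domain_of(rec.url).split(".")
        candidates = {".".join(parts[i:]) for i in range(len(parts))}
        if candidates & opted_out:
            flagged.append(rec)  # for manual review, not automatic deletion
    methods: dict[str, int] = {}
    for rec in records:
        methods[rec.access_method] = methods.get(rec.access_method, 0) + 1
    return {"total_sources": len(records), "flagged": flagged, "access_methods": methods}


# Usage with made-up domains.
opt_outs = load_opt_outs("domain\nnews.example.com\nphotos.example.org\n")
records = [
    SourceRecord("crawl-2025-q3", "https://www.news.example.com/story/1", "web_crawler"),
    SourceRecord("crawl-2025-q3", "https://blog.example.net/post", "web_crawler"),
    SourceRecord("licensed-images", "https://cdn.photos.example.org/a.jpg", "licensed_feed"),
]
report = audit(records, opt_outs)
print(len(report["flagged"]), report["access_methods"])
# -> 2 {'web_crawler': 2, 'licensed_feed': 1}
```

Checking parent domains means an opt-out registered for `photos.example.org` also catches files served from a subdomain such as `cdn.photos.example.org`.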

The Economics of Regulation: Cost and ROI Impact

Compliance isn’t free, but in 2026, it is incentivized. Our data shows that while a typical UK AI startup might spend £30,000 to £75,000 on 2026-specific compliance audits, the offsets are substantial.

- Growth Zone Savings: Locating a 500 MW data centre in a Scottish AI Growth Zone can save up to £80 million annually in electricity bills.

- Sovereign AI Unit: Access to the £500m fund is contingent on meeting AISI redlines.

- AISI Security Audit: Estimated at £20k–£30k for a full frontier model evaluation.
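The £80 million Growth Zone figure is easier to sanity-check as a per-unit saving. Assuming, purely for illustration, that the 500 MW facility draws its full capacity around the clock (8,760 hours a year), the claimed saving implies an electricity discount of roughly £18 per MWh:

```latex
E = 500\,\text{MW} \times 8{,}760\,\text{h/yr} = 4{,}380{,}000\,\text{MWh/yr}
\qquad
\frac{\text{£}80{,}000{,}000}{4{,}380{,}000\,\text{MWh}} \approx \text{£}18.26/\text{MWh}
```

A site running below full load would need a proportionally larger per-MWh discount to reach the same annual total.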

Risk Mitigation: Don’t self-certify for the Sovereign AI Unit fund. Use a certified third-party auditor to avoid the “Audit Recapture” penalties introduced in the 2026 Spring Budget.

FAQs: Common Questions About UK AI Regulation Today

Q: Is the EU AI Act applicable to UK companies in 2026?

A: Only if you place AI systems on the EU market or their outputs are used in the EU. For domestic UK operations, the DUAA 2025 is your primary legal hook.

Q: Who is the current AI Minister?

A: Kanishka Narayan MP serves as the Minister for AI and Online Safety within the Department for Science, Innovation and Technology (DSIT).

Expert Verdict

The UK has successfully “threaded the needle” in 2026. By choosing Security over Risk, the government has made London a global headquarters for agentic AI and commercial research. However, the March 18 deadline is a hard wall. If your data provenance isn’t clean by then, no amount of “pro-innovation” rhetoric will save you from an ICO or IPO enforcement notice.