The AI gold rush of 2024 has officially hit the “Compliance Wall” of 2026. Enterprises that treated AI as a pure engineering challenge are currently stalled in “Pilot Purgatory,” while those treating it as a governance-first strategic pivot are capturing market share.

If your AI transformation is failing, it isn’t because your models aren’t “smart” enough. It’s because your organizational guardrails are still built for static software, not probabilistic intelligence.

Why is AI Transformation a Problem of Governance?

Snippet-Ready Definition: AI transformation is a governance problem because scaling non-deterministic systems requires continuous operational controls, real-time risk mitigation, and clear accountability frameworks. Unlike traditional IT, AI behaviors evolve post-deployment, making “set-and-forget” policies a recipe for catastrophic legal and operational failure.

In 2026, the complexity of Agentic AI—autonomous systems that can execute workflows without a human-in-the-loop—has made legacy data policies obsolete. Transformation fails when the “Can we build it?” (Engineering) outpaces the “Should we deploy it?” (Governance).

The A.E.G.I.S. Protocol: A Proprietary Framework

To solve this, we implement the A.E.G.I.S. Protocol (AI Enterprise Governance & Intelligent Scaling):

- Assessment of Autonomy: Defining the “Blast Radius” of every agentic workflow.

- Enforcement of Guardrails: Hard-coding ethical and operational limits into the MLOps pipeline.

- Governance at Runtime: Real-time monitoring for Model Drift and hallucinations.

- Integrated Audit Trails: Automated logging using robust account audit software for EU AI Act and NIST AI RMF compliance.

- Strategic Alignment: Ensuring AI outcomes map directly to ARR and shareholder value.

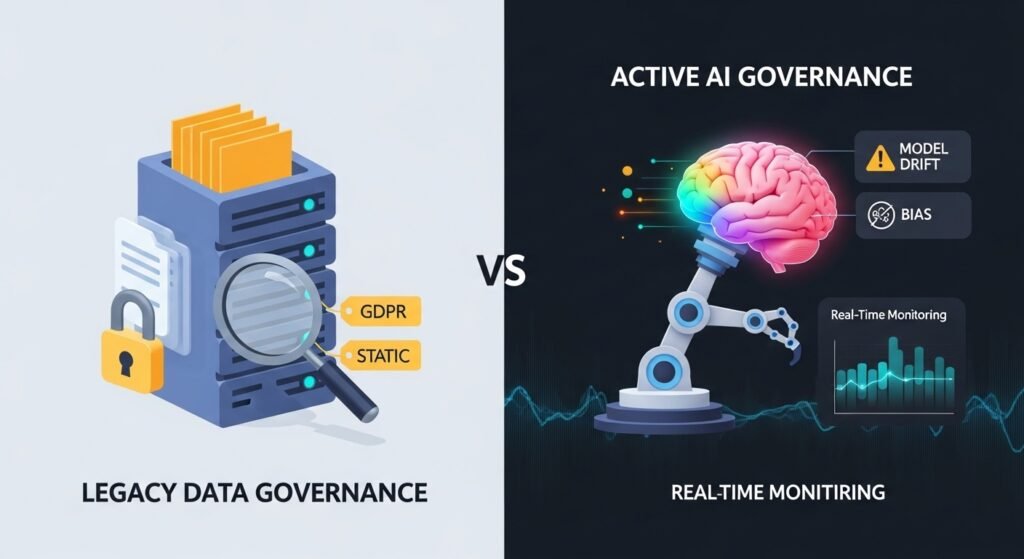

Data Governance vs. AI Governance: The Critical Split

Many CTOs mistakenly believe their existing Data Governance covers AI. It doesn’t.

| Feature | Static IT Governance | Active AI Governance (2026) |
|---|---|---|
| Logic Type | Deterministic (If/Then) | Probabilistic (Weights/Biases) |
| Primary Risk | Unauthorized Access | Model Drift & Hallucination |
| Audit Frequency | Annual/Quarterly | Continuous/Real-time |
| Regulatory Focus | GDPR / CCPA | EU AI Act / NIST AI RMF |

Information Gain Insight: Most frameworks focus on Input (training data). However, in 2026, the real risk lies in Recursive Feedback Loops, where AI-generated content is re-ingested by the model, leading to “Model Collapse.” Your governance must audit the Self-Generation cycle.
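One way to start auditing the Self-Generation cycle is to tag every record with its provenance and measure how much of a candidate training batch is model-generated. The sketch below is illustrative only: the `Record` type, the `source` tag values, and both function names are assumptions, not part of any named framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    text: str
    source: str  # hypothetical provenance tag: "human" or "model"


def split_by_provenance(records):
    """Separate human-authored records from everything else."""
    human = [r for r in records if r.source == "human"]
    synthetic = [r for r in records if r.source != "human"]
    return human, synthetic


def synthetic_share(records):
    """Fraction of records that are not human-authored (0.0 for an empty batch)."""
    if not records:
        return 0.0
    _, synthetic = split_by_provenance(records)
    return len(synthetic) / len(records)
```

A governance check could log `synthetic_share` for each retraining batch and block the run when it exceeds a limit the organization chooses.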

Pros & Cons: Centralized AI Oversight

| Pros (Accelerators) | Cons (Friction Points) |
|---|---|
| Legal Certainty: Unblocks stalled C-suite approvals. | Initial Latency: Setup phase can slow early pilots. |
| Brand Protection: Prevents public-facing hallucinations. | Cost: Requires specialized AI Safety personnel. |
| Scale: Enables “Paved Roads” for rapid dev deployment. | Complexity: Hard to govern “Black Box” models. |

SaaS Case Simulation: The $50M ARR Turnaround

Company: CloudFlow (B2B Project Management SaaS)

- The Problem: Attempted to launch an “Auto-Pilot” feature that assigned tasks and budgets autonomously. Legal blocked the launch for 8 months due to “unquantifiable risk.”

- The Intervention: Applied the A.E.G.I.S. Protocol. Defined a “Human-on-the-Loop” trigger for any budget allocation over $500.

- The Result:

  - Time-to-Market: Reduced from 14 months (projected) to 4 months.

  - ARR Impact: Contributed to a 12% expansion in NRR (Net Revenue Retention) within two quarters.

  - Risk Mitigation: Caught 14 potential “Budget Spirals” via automated runtime guardrails.
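The “Human-on-the-Loop” trigger in this simulation could be sketched roughly as below. Only the $500 threshold comes from the case; the `BudgetAction` type, the `route_action` function, and the two route labels are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from decimal import Decimal

# From the case simulation: allocations over $500 go to a human.
REVIEW_THRESHOLD = Decimal("500")


@dataclass(frozen=True)
class BudgetAction:
    task_id: str
    amount: Decimal  # proposed allocation in dollars


def route_action(action, threshold=REVIEW_THRESHOLD):
    """Return "human_review" for allocations above the threshold, else "auto_approve"."""
    if action.amount < 0:
        raise ValueError("amount must be non-negative")
    return "human_review" if action.amount > threshold else "auto_approve"
```

Using `Decimal` rather than floats avoids rounding surprises right at the threshold, which matters when the rule itself is the audit evidence.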

5 Steps to Operationalize Your 2026 AI Roadmap

- Inventory “Shadow AI”: Identify every unsanctioned LLM API currently being used by your developers.

- Map Decision Rights: Explicitly state who is legally liable when an autonomous agent makes a $10k error.

- Deploy Runtime Monitoring: Integrate tools that flag Sentiment Shift or Prompt Injection attempts in real time. [Reference: NIST AI RMF Guidelines]

- Build “AI Paved Roads”: Create pre-approved templates for low-risk use cases (e.g., internal documentation) to speed up dev cycles.

- Continuous Validation: Treat AI as a “living employee.” Conduct quarterly “Performance Reviews” on model accuracy vs. business goals.

Pro Tip: Don’t let your legal team write your AI policy in a vacuum. If a policy adds more than 20% friction to the dev cycle, your engineers will find a workaround, creating more risk.

The Cost of Governance vs. The ROI of Speed

Estimated Costs (Enterprise Scale):

- Software (Monitoring/Audit Tools): $40k–$150k / year.

- Personnel (AI Safety Officer/Compliance Lead): $180k–$250k / year.

The ROI Math:

- Prevention of Fines: Avoiding a single EU AI Act violation (fines reach up to EUR 35 million or 7% of worldwide annual turnover, whichever is higher, for the most serious violations).

- Innovation Velocity: Companies with a clear governance framework deploy AI features 3.4x faster because the “Security/Legal hurdle” is standardized.

- Valuation Premium: In 2026, VC and PE firms apply a “Governance Discount” to startups with messy AI implementations.

Risk Mitigation: Preventing the “Agentic Spiral”

As we move toward Agentic AI, the greatest risk is Cascading Failure—where one AI agent’s error triggers a chain reaction in another.

The Solution: Implement a Circuit Breaker Pattern. Just like electrical grids, your AI governance should automatically disconnect an autonomous agent if its confidence score drops below a specific threshold (e.g., 85%).
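A minimal sketch of such a circuit breaker, assuming each agent reports a confidence score between 0 and 1 before acting. The class and method names are hypothetical, the 0.85 default mirrors the example threshold above, and requiring a manual reset is a design assumption rather than something the pattern mandates.

```python
class AgentCircuitBreaker:
    """Disconnects an agent once its confidence falls below a threshold."""

    def __init__(self, min_confidence=0.85):
        if not 0.0 <= min_confidence <= 1.0:
            raise ValueError("min_confidence must be between 0 and 1")
        self.min_confidence = min_confidence
        self.is_open = False  # open circuit means the agent is disconnected
        self.trip_reason = None

    def check(self, confidence):
        """Return True if the agent may act; trip the breaker on low confidence."""
        if self.is_open:
            return False  # stay disconnected until someone resets the breaker
        if confidence < self.min_confidence:
            self.is_open = True
            self.trip_reason = (
                f"confidence {confidence:.2f} below {self.min_confidence:.2f}"
            )
            return False
        return True

    def reset(self):
        """Reconnect the agent, for example after a human has reviewed the trip."""
        self.is_open = False
        self.trip_reason = None
```

Because the breaker stays open after tripping, a later high-confidence score does not silently reconnect the agent, which is what stops one agent's error from cascading into the next.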

Expert Verdict

“Transformation is not a technology purchase; it is a cultural and structural evolution. If you are waiting for the ‘perfect model’ to solve your problems, you’ve already lost. The winners of 2026 are those who mastered the accountability layer.”

A: It must be a triad: The CTO (Tech), the General Counsel (Risk), and a Business Lead (ROI).

A: Legally, no. Strategically, yes. It is the global language of “Safe AI.”

A: Use automated MLOps triggers that alert your team when the model’s output variance exceeds 5% from its baseline training.
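As a rough sketch of such a trigger, the check below compares the variance of live outputs with a stored baseline and alerts when the relative change exceeds 5%. Reading “output variance” as the population variance of a numeric output score is an assumption, and the function names are hypothetical.

```python
from statistics import pvariance


def variance_drift(baseline_outputs, live_outputs):
    """Relative change in output variance versus the baseline (0.05 means 5%)."""
    base_var = pvariance(baseline_outputs)
    if base_var == 0:
        raise ValueError("baseline variance is zero; relative drift is undefined")
    return abs(pvariance(live_outputs) - base_var) / base_var


def should_alert(baseline_outputs, live_outputs, tolerance=0.05):
    """True when drift exceeds the tolerance (5% by default, as in the answer above)."""
    return variance_drift(baseline_outputs, live_outputs) > tolerance
```

In practice the baseline would be captured at validation time and the live window would be a recent slice of production outputs.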